Use NVIDIA CUDA in Docker for Data Science Projects

Apr 11, 2022 · 2 Min Read

When I was working on a data science project running in Docker, I found that processing large datasets could be slow. Even tools like PyArrow were not fast enough in Docker. When I went through the logs, I saw that giving the container access to NVIDIA CUDA would make it much faster. In this article I will explain how you can add NVIDIA CUDA to Docker.

What is CUDA?

CUDA is a parallel computing platform and application programming interface that allows software to use certain types of graphics processing unit for general purpose processing, an approach called general-purpose computing on GPUs. (from Wikipedia)

In short, the CUDA toolkit from NVIDIA lets applications that are built to use the GPU offload work to it. That is why PyArrow performed better for me with CUDA enabled.

Make sure to have GPU Drivers installed

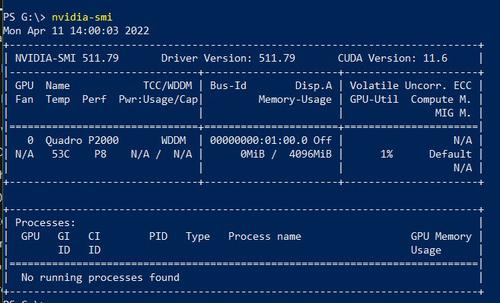

On your machine, make sure the GPU drivers are installed. You can check the driver documentation on how to install them. Finally, verify that the driver is installed by running the command nvidia-smi in a terminal (or in PowerShell on Windows).
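If you prefer to run this check from Python (for example at the start of a notebook or a setup script), the sketch below wraps the same nvidia-smi command. The function names `gpu_summary` and `parse_gpu_lines` are my own and purely illustrative; the only external piece is the nvidia-smi query itself, and the script assumes it is on your PATH when the driver is installed.

```python
import shutil
import subprocess


def parse_gpu_lines(output):
    """Turn 'name, driver_version' CSV lines into (name, driver) tuples."""
    rows = []
    for line in output.splitlines():
        if not line.strip():
            continue  # skip blank lines
        # Split on the last comma so a comma inside the GPU name is kept.
        name, _, driver = line.rpartition(",")
        rows.append((name.strip(), driver.strip()))
    return rows


def gpu_summary():
    """Return a list of (gpu_name, driver_version), or None if the check fails."""
    if shutil.which("nvidia-smi") is None:
        return None  # driver tools are not on PATH
    result = subprocess.run(
        ["nvidia-smi", "--query-gpu=name,driver_version", "--format=csv,noheader"],
        capture_output=True,
        text=True,
        check=False,
    )
    if result.returncode != 0:
        return None  # nvidia-smi exists but could not talk to the driver
    return parse_gpu_lines(result.stdout)


if __name__ == "__main__":
    gpus = gpu_summary()
    if not gpus:
        print("No NVIDIA driver detected (nvidia-smi missing or failed).")
    else:
        for name, driver in gpus:
            print(f"{name} (driver {driver})")
```

If the script reports no driver, fix the host installation first, because nothing inside Docker can make up for a missing driver on the host.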

Add to Docker

Here is the Dockerfile, followed by an explanation of each step:

FROM nvidia/cuda:11.6.0-base-ubuntu20.04

WORKDIR /app

RUN apt update && \
    apt install --no-install-recommends -y build-essential python3 python3-pip && \
    apt clean && rm -rf /var/lib/apt/lists/*

COPY requirements.txt .

RUN pip install --no-cache-dir --upgrade -r requirements.txt

COPY . .

CMD ["whatever", "you", "want"]

Here is an explanation of the steps:

- Step 1: We use the official NVIDIA CUDA base image, which is built on Ubuntu 20.04.

- Step 2: Make /app the default working directory.

- Step 3: Install the build tools, Python 3, and pip, then clean the apt cache to keep the image small.

- Step 4: Copy requirements.txt into the image.

- Step 5: Install the Python requirements.

- Step 6: Copy the source from the project directory into the image.

- Step 7: Set the command that runs your project, e.g. for Django it can be ["python3", "manage.py", "runserver"]. The list form of CMD is parsed as JSON, so it needs double quotes, and this image provides python3 rather than python.
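The Dockerfile only prepares the image. To actually see the GPU, the container also has to be started with GPU access, which on recent Docker versions is requested with the --gpus flag and relies on the NVIDIA Container Toolkit being installed on the host. Here is a minimal sketch that assembles the build and run commands; the image name my-cuda-app and the helper names are hypothetical, chosen only for illustration.

```python
import shlex


def build_command(tag, context="."):
    """Argument list for `docker build` using the Dockerfile in `context`."""
    return ["docker", "build", "-t", tag, context]


def run_command(tag, gpus="all", command=None):
    """Argument list for `docker run` with GPU access requested via --gpus."""
    args = ["docker", "run", "--rm", "--gpus", gpus, tag]
    # Any extra command overrides the image's CMD, e.g. ["nvidia-smi"].
    return args + list(command or [])


if __name__ == "__main__":
    tag = "my-cuda-app"  # hypothetical image name
    # Print the commands so they can be reviewed or pasted into a terminal.
    print(shlex.join(build_command(tag)))
    print(shlex.join(run_command(tag, command=["nvidia-smi"])))
```

Running the second printed command should show the same GPU table inside the container that nvidia-smi shows on the host. The argument lists can also be passed directly to subprocess.run if you want to drive Docker from a script.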

Conclusion

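That is all. With the steps above, your Docker image is ready to use CUDA, provided the container is started with GPU access (for example with docker run --gpus all; see the NVIDIA documentation in the references). If you have any questions, feel free to ask them in the comment section below.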

References:

- NVIDIA documentation: https://github.com/NVIDIA/nvidia-docker

- How to use an NVIDIA GPU with Docker containers: https://www.cloudsavvyit.com/14942/how-to-use-an-nvidia-gpu-with-docker-containers/

- How to properly use the GPU within a Docker container: https://towardsdatascience.com/how-to-properly-use-the-gpu-within-a-docker-container-4c699c78c6d1

Last updated: Jun 05, 2026